- Conda install XGBoost for macOS: code
- Conda install XGBoost for macOS: download
- Conda install XGBoost for macOS: how to

Copy into place the configuration file we intend to use when compiling XGBoost.

# Conda install XGBoost for macOS: code

First, check out the code repository from GitHub:

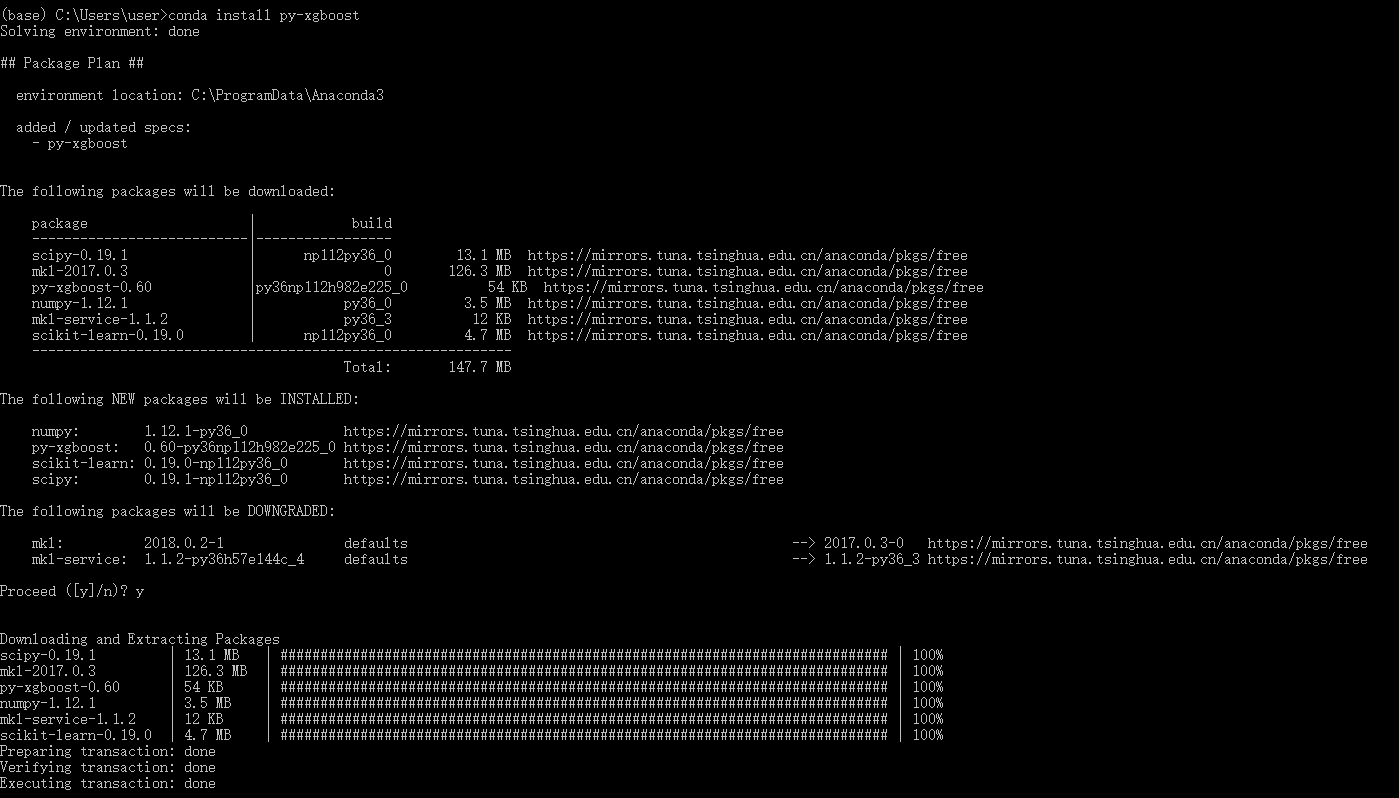
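
Because the checkout command appears above only as an image, here is a hedged Python sketch of the same step. It assumes the official repository at `https://github.com/dmlc/xgboost` and passes git's `--recursive` flag so that the submodules XGBoost's source depends on are cloned too. `clone_command` and `clone_xgboost` are hypothetical helper names, and `git` must already be on your PATH.

```python
import subprocess
from pathlib import Path
from typing import List

# Assumption: the official XGBoost repository on GitHub.
XGBOOST_REPO = "https://github.com/dmlc/xgboost"


def clone_command(dest: str = "xgboost", repo: str = XGBOOST_REPO) -> List[str]:
    """Build the `git clone` argument list without running it.

    --recursive also clones the git submodules XGBoost's source depends on.
    """
    return ["git", "clone", "--recursive", repo, dest]


def clone_xgboost(dest: str = "xgboost") -> Path:
    """Clone the repository into `dest` and return its absolute path.

    Raises subprocess.CalledProcessError if git exits with an error.
    """
    subprocess.run(clone_command(dest), check=True)
    return Path(dest).resolve()


if __name__ == "__main__":
    # Dry run: print the command instead of executing it.
    print(" ".join(clone_command()))
```

Running the script only prints the command; call `clone_xgboost()` when you actually want to create the `xgboost` directory.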

# Conda install XGBoost for macOS: download

The next step is to download and compile XGBoost for your system.

After MacPorts and a working Python environment are installed, you can install and select GCC 7. Then confirm that your GCC installation was successful: you should see the version of GCC printed.
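
One way to script the confirmation step is to parse the output of `gcc -v`, which GCC writes to stderr. This is a minimal Python sketch with hypothetical helper names; it assumes the selected compiler is invoked as `gcc` and that GCC prints a line beginning with `gcc version`. On macOS, a `gcc` that is really Apple's clang reports a clang version instead, so the parser returns `None` in that case. Treating any major version of 7 or later as acceptable is also an assumption.

```python
import re
import shutil
import subprocess
from typing import Optional, Tuple

# Matches the line GCC prints with -v, e.g. "gcc version X.Y.Z (...)".
_GCC_VERSION_RE = re.compile(r"^gcc version (\d+)\.(\d+)\.(\d+)", re.MULTILINE)


def parse_gcc_version(text: str) -> Optional[Tuple[int, int, int]]:
    """Return (major, minor, patch) parsed from `gcc -v` output, or None.

    Returns None when no "gcc version" line is present, for example when
    `gcc` is Apple's clang, which reports a clang version instead.
    """
    match = _GCC_VERSION_RE.search(text)
    if match is None:
        return None
    major, minor, patch = (int(part) for part in match.groups())
    return major, minor, patch


def installed_gcc_version(compiler: str = "gcc") -> Optional[Tuple[int, int, int]]:
    """Run `<compiler> -v` and parse its version; None if unavailable."""
    if shutil.which(compiler) is None:
        return None
    # GCC writes the -v banner to stderr; capture both streams to be safe.
    result = subprocess.run(
        [compiler, "-v"],
        stdout=subprocess.PIPE,
        stderr=subprocess.PIPE,
        universal_newlines=True,
    )
    return parse_gcc_version(result.stderr + result.stdout)


if __name__ == "__main__":
    version = installed_gcc_version()
    # Assumption: any GCC with major version 7 or later is acceptable.
    if version is None or version[0] < 7:
        print("GCC 7 or later was not found as `gcc`:", version)
    else:
        print("Found GCC", ".".join(str(part) for part in version))
```

The script uses `stdout=`/`stderr=` pipes and `universal_newlines=True` rather than `capture_output`/`text`, so it also runs on Python 3.6.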

# Conda install XGBoost for macOS: how to

> This tutorial is divided into 3 parts. Note: I have used this procedure for years on a range of different macOS versions and it has not changed. This tutorial was written and tested on macOS High Sierra (10.13.1).
>
> You need GCC and a Python environment installed in order to build and install XGBoost for Python. I recommend GCC 7 and Python 3.6, and I recommend installing these prerequisites using MacPorts. For step-by-step help installing MacPorts and a Python environment, see this tutorial: How to Install a Python 3 Environment on Mac OS X for Machine Learning and Deep Learning
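
The prerequisites above can be checked with a short Python script before building. This sketch assumes Python 3.6 as the minimum (following the recommendation above) and looks for the MacPorts `port` command and `gcc` on your PATH; `python_ok` and `check_prerequisites` are hypothetical names, not part of any tool.

```python
import shutil
import sys
from typing import List, Sequence, Tuple

# Minimum Python version, taken from the recommendation above (Python 3.6).
MIN_PYTHON: Tuple[int, int] = (3, 6)


def python_ok(version: Sequence[int], minimum: Tuple[int, int] = MIN_PYTHON) -> bool:
    """True if the (major, minor) prefix of `version` meets `minimum`."""
    return tuple(version[:2]) >= minimum


def check_prerequisites(tools: Sequence[str] = ("port", "gcc")) -> List[str]:
    """Return a list of problems found; an empty list means all checks passed.

    `port` is the MacPorts command-line tool; `gcc` should resolve to the
    MacPorts GCC once it has been installed and selected.
    """
    problems = []
    if not python_ok(sys.version_info):
        found = ".".join(str(part) for part in sys.version_info[:2])
        problems.append(f"Python {found} is older than {MIN_PYTHON[0]}.{MIN_PYTHON[1]}")
    for tool in tools:
        if shutil.which(tool) is None:
            problems.append(f"'{tool}' was not found on PATH")
    return problems


if __name__ == "__main__":
    issues = check_prerequisites()
    print("All prerequisites found." if not issues else "\n".join(issues))
```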

XGBoost is a distributed and efficient gradient boosting algorithm based on decision trees (CART). It can be applied to tasks such as classification, regression, and ranking. Compared with the general GBDT algorithm, XGBoost has the following advantages:

- It penalizes the weights of leaf nodes, which is equivalent to adding a regularization term to the objective and helps prevent overfitting.
- Its objective-function optimization uses the second derivative of the loss function with respect to the function being learned, while GBDT uses only first-order information.
- It supports column sampling, similar to random forest: the attributes (features) are sampled when each tree is constructed. This makes training fast and gives good results.
- Similar to a learning rate, each tree's weight is shrunk after the tree is learned, which reduces that tree's influence and leaves room for later trees to keep improving the model.
- Trees can be built with either an exact algorithm or an approximate algorithm. The approximate algorithm divides each feature into buckets at weighted quantiles, where the weights are the second derivatives of the loss function.
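
The second-order, regularization, shrinkage, and weighted-quantile points above can be sketched in plain Python. This is a minimal illustration, not XGBoost's implementation: it assumes squared-error and logistic losses, uses hypothetical function names, and writes `lam` for the L2 penalty on leaf weights, `gamma` for the per-leaf penalty, and `eta` for the shrinkage factor. With G and H the sums of first and second derivatives over a leaf's samples, the leaf weight is -G / (H + lam).

```python
import math
from typing import List, Sequence, Tuple


def squared_error_grad_hess(y: float, pred: float) -> Tuple[float, float]:
    """First and second derivatives of 0.5 * (y - pred)**2 w.r.t. pred."""
    return pred - y, 1.0


def logistic_grad_hess(y: float, margin: float) -> Tuple[float, float]:
    """Derivatives of the log loss w.r.t. the raw margin, for y in {0, 1}."""
    p = 1.0 / (1.0 + math.exp(-margin))
    return p - y, p * (1.0 - p)


def leaf_weight(G: float, H: float, lam: float) -> float:
    """Optimal leaf weight -G / (H + lam); lam is the L2 penalty on weights."""
    return -G / (H + lam)


def split_gain(GL: float, HL: float, GR: float, HR: float,
               lam: float, gamma: float) -> float:
    """Reduction in regularized loss from splitting one leaf into two.

    gamma penalizes the extra leaf, so the split pays off only if gain > 0.
    """
    def score(G: float, H: float) -> float:
        return G * G / (H + lam)

    return 0.5 * (score(GL, HL) + score(GR, HR) - score(GL + GR, HL + HR)) - gamma


def boost_constant(ys: Sequence[float], rounds: int, eta: float,
                   lam: float) -> List[float]:
    """Boost single-leaf 'trees' on squared error, shrinking each by eta."""
    preds = [0.0] * len(ys)  # assumption: start every prediction at 0.0
    for _ in range(rounds):
        grads_hess = [squared_error_grad_hess(y, p) for y, p in zip(ys, preds)]
        G = sum(g for g, _ in grads_hess)
        H = sum(h for _, h in grads_hess)
        # Shrinkage: add only a fraction eta of the new tree's leaf weight.
        w = eta * leaf_weight(G, H, lam)
        preds = [p + w for p in preds]
    return preds


def weighted_quantile_candidates(values: Sequence[float],
                                 hessians: Sequence[float],
                                 num_buckets: int) -> List[float]:
    """Split candidates where cumulative Hessian weight crosses k/num_buckets.

    A simplified version that fully sorts the data; XGBoost uses a weighted
    quantile sketch instead of a full sort.
    """
    pairs = sorted(zip(values, hessians))
    total = sum(h for _, h in pairs)
    candidates: List[float] = []
    cumulative, k = 0.0, 1
    for value, h in pairs:
        cumulative += h
        while k < num_buckets and cumulative >= total * k / num_buckets:
            if not candidates or candidates[-1] != value:
                candidates.append(value)
            k += 1
    return candidates
```

For example, with targets [1, 2, 3, 10] and all predictions at 0, `split_gain(-6, 3, -10, 1, lam=1.0, gamma=0.0)` scores splitting off the outlier 10 at about 3.9, so any `gamma` above that makes the split unprofitable. In `weighted_quantile_candidates`, samples with larger second derivatives occupy more of each bucket's weight, so the candidate split points become finer around them.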